Electricity

A series of free online high school physics video lessons.

In this lesson, we will learn about:

- Electric Current

- Resistance

- Potential Difference

- Ohm's Law

Electric Current

Electric current is the rate at which charge flows through a conductor. By convention, the direction of current is taken to be the direction in which positive charge would flow. Current is driven by a difference in electric potential, and conventional current flows from higher potential to lower potential. Current is measured in amperes: 1 ampere = 1 coulomb per second.

Current = charge passing a given point / time.
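As a quick check on the formula above, here is a minimal Python sketch. The function name `current_amperes` and the sample values are illustrative assumptions, not part of the lesson.

```python
def current_amperes(charge_coulombs: float, time_seconds: float) -> float:
    """Average current: charge passing a given point divided by elapsed time."""
    if time_seconds <= 0:
        raise ValueError("time must be positive")
    return charge_coulombs / time_seconds


# Illustrative values (assumed): 12 C of charge passes a point in 4 s.
print(current_amperes(12.0, 4.0))  # 3.0 (amperes)
```

Doubling the charge that passes in the same time doubles the current, while taking twice as long to pass the same charge halves it.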

Understanding electric current.

Define and calculate electric current

Resistance

Resistance is the ratio of the potential difference across a conductor to the current that it produces. A high resistance needs a large potential difference to generate an appreciable current. When several paths are available, more current flows through the path with the lower resistance.

How resistors affect electric current.

Define conductivity, resistivity and resistance.

Explain the factors and calculate the resistance of a conductor.
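The factors that set the resistance of a uniform conductor are its material (resistivity), its length, and its cross-sectional area, related by the standard formula resistance = resistivity x length / area. The sketch below assumes a copper wire with a resistivity of about 1.68e-8 ohm-metres at room temperature. The function name and the wire dimensions are illustrative assumptions.

```python
def wire_resistance(resistivity_ohm_m: float, length_m: float, area_m2: float) -> float:
    """Resistance of a uniform conductor: R = resistivity * length / area."""
    if area_m2 <= 0:
        raise ValueError("cross-sectional area must be positive")
    return resistivity_ohm_m * length_m / area_m2


# Assumed values: copper (about 1.68e-8 ohm*m), 10 m long, 1 mm^2 cross-section.
COPPER_RESISTIVITY = 1.68e-8   # ohm * m, approximate room-temperature value
resistance = wire_resistance(COPPER_RESISTIVITY, length_m=10.0, area_m2=1.0e-6)
print(f"{resistance:.3f} ohm")  # 0.168 ohm

# Conductivity is the reciprocal of resistivity.
conductivity = 1.0 / COPPER_RESISTIVITY
```

A longer wire has more resistance and a thicker wire has less, which matches the formula: resistance grows with length and shrinks with area.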

Potential Difference

Potential difference (voltage) is the difference in electric potential energy per unit charge between two points. It is what drives current through a circuit and lets us make the current do useful work. A battery serves as a nearly constant source of potential difference that forces current to flow.

Understanding potential difference.

Define and calculate electric potential energy.

Define and calculate potential difference.

Utilize electron-volts as a unit of energy for very small charges moving through a potential difference.
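The ideas above can be sketched in Python: potential difference is energy per unit charge, and one electron-volt is the energy gained by one elementary charge moving through 1 volt. The function names and sample values are illustrative assumptions. The elementary charge is the exact SI value.

```python
ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs (exact SI definition)


def potential_difference(energy_joules: float, charge_coulombs: float) -> float:
    """Potential difference in volts: change in potential energy per unit charge."""
    return energy_joules / charge_coulombs


def joules_to_electron_volts(energy_joules: float) -> float:
    """Convert an energy in joules to electron-volts."""
    return energy_joules / ELEMENTARY_CHARGE


# Assumed example: 18 J of energy is transferred to 2 C of charge.
print(potential_difference(18.0, 2.0))  # 9.0 (volts)

# Assumed example: one electron moves through a 9 V potential difference.
energy = ELEMENTARY_CHARGE * 9.0         # energy in joules, about 1.44e-18 J
print(round(joules_to_electron_volts(energy), 6))  # 9.0 (electron-volts)
```

The second example shows why electron-volts are convenient: for a single elementary charge, the energy in eV is numerically equal to the potential difference in volts.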

Ohm's Law

Ohm's law states that the current through a conductor is directly proportional to the potential difference across it. All ohmic materials satisfy this relationship, according to the equation:

Potential difference = current x resistance.
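Ohm's law can be rearranged to find any one of the three quantities from the other two. Here is a minimal Python sketch. The function names and the 9 V, 450 ohm example are assumptions chosen for illustration, and the relationship only holds for ohmic materials.

```python
def voltage(current_a: float, resistance_ohm: float) -> float:
    """Potential difference = current x resistance."""
    return current_a * resistance_ohm


def current(voltage_v: float, resistance_ohm: float) -> float:
    """Current = potential difference / resistance."""
    if resistance_ohm == 0:
        raise ValueError("resistance must be non-zero")
    return voltage_v / resistance_ohm


def resistance(voltage_v: float, current_a: float) -> float:
    """Resistance = potential difference / current."""
    if current_a == 0:
        raise ValueError("current must be non-zero")
    return voltage_v / current_a


# Assumed example: a 9 V battery connected across a 450 ohm resistor.
i = current(9.0, 450.0)
print(f"{i:.3f} A")                    # 0.020 A
print(f"{voltage(i, 450.0):.1f} V")    # 9.0 V
print(f"{resistance(9.0, i):.1f} ohm") # 450.0 ohm
```

For a fixed resistance, doubling the potential difference doubles the current, which is the proportionality that defines an ohmic material.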

How to use Ohm's law to relate potential difference and current flow.

Define and calculate resistance, current, and potential difference using Ohm's Law

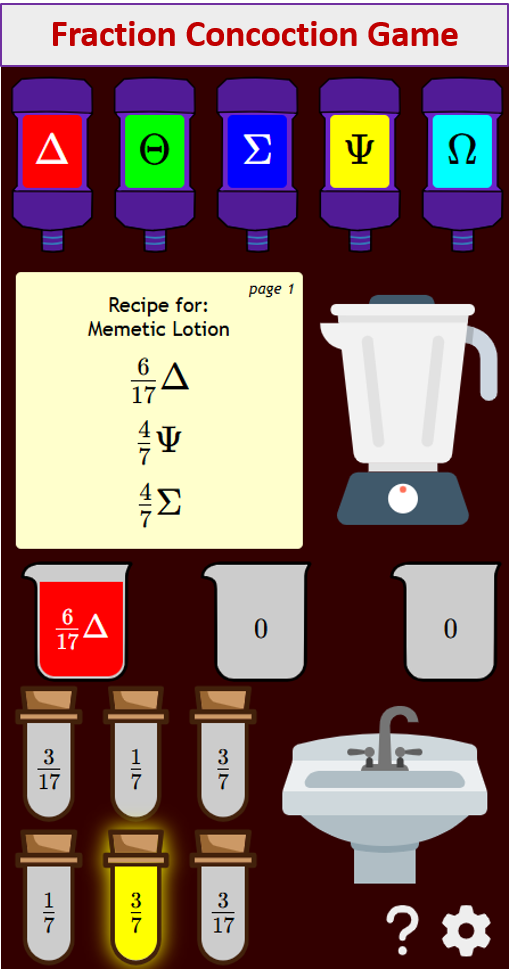
